Pliops, an Israeli startup that develops data processors for cloud and enterprise data centers, has closed a $100 million Series D funding round led by previous investor Koch Disruptive Technologies (KDT). This brings the total investment in Pliops to more than $200 million.

In February 2021, Pliops raised $65 million from companies such as video graphics leader Nvidia and Koch Industries.

Check Point explains that a data center is a facility that provides shared access to applications and data using a complex network, compute, and storage infrastructure. Industry standards exist to assist in designing, constructing, and maintaining data center facilities and infrastructures to ensure the data is both secure and highly available.

Everything is going into the cloud these days. More and more software companies are offering their programs online as software as a service, and services like Google Docs have been online from the start. More firms are also moving their data into cloud services, which spares them from investing in their own servers.

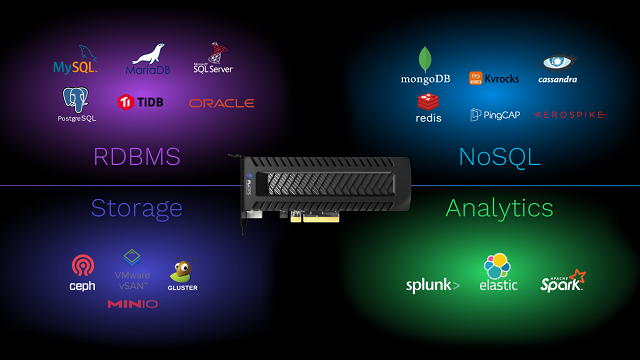

Founded in 2017 by flash storage industry veterans, including Uri Beitler, from Samsung, M-Systems and XtremIO, Pliops says that it is creating a new category of product. The company says it enables cloud and enterprise data centers to access data up to 50X faster with 1/10th of the computational load and power consumption. Its technology collapses multiple inefficient layers into one ultra-fast device based on what it calls a groundbreaking patent-pending approach. Pliops boasts that its solution can solve the scalability challenges raised by the “cloud data explosion” and the increasing data requirements of AI and ML applications.

Pliops’ Storage Processor (PSP) is a hardware-based storage accelerator that enables cloud and enterprise customers to offload and accelerate data-intensive workloads using just a fraction of the computational load and power.

“It became clear that today’s data needs are incompatible with yesterday’s data center architecture. Massive data growth has collided with legacy compute and storage shortcomings, creating slowdowns in computing, storage bottlenecks and diminishing networking efficiency,” Beitler told TechCrunch. “While CPU performance is increasing, it’s not keeping up, especially where accelerated performance is critical. Adding more infrastructure often proves to be cost prohibitive and hard to manage. As a result, organizations are looking for solutions that free CPUs from computationally intensive storage tasks.”