YouTube, like its parent company Google, uses complex algorithms to assemble the list of videos it offers its users. These algorithms draw on a variety of inputs and data to decide what to offer you. Now a new study from Mozilla has found that YouTube may actually be promoting violent content and recommending videos that violate the company’s own policies.

Mozilla researched the matter with the help of 37,380 YouTube users. The volunteers, whom Mozilla calls watchdogs, provided data on the “regrettable” experiences they had on YouTube. Mozilla says that this gave the organization insight into a pool of YouTube’s tightly held data in the “largest-ever crowdsourced” investigation into YouTube’s algorithm. Collectively, the participants flagged 3,362 of what Mozilla calls “regrettable” videos, with reports coming from 91 countries, between July 2020 and May 2021.

So what exactly did the Mozilla volunteers find? According to Mozilla, they reported everything from Covid fear-mongering to political misinformation to wildly inappropriate “children’s” cartoons. The most frequent Regret categories were misinformation, violent or graphic content, hate speech, and spam/scams.

According to the study, 71% of all the videos that volunteers reported as regrettable had been actively recommended by YouTube’s own algorithm. Recommended videos were also 40% more likely to be reported than videos the volunteers had searched for.

“YouTube needs to admit their algorithm is designed in a way that harms and misinforms people,” says Brandi Geurkink, Mozilla’s Senior Manager of Advocacy. “Our research confirms that YouTube not only hosts, but actively recommends videos that violate its very own policies. We also now know that people in non-English speaking countries are the most likely to bear the brunt of YouTube’s out-of-control recommendation algorithm.”

“Mozilla hopes that these findings, which are just the tip of the iceberg, will convince the public and lawmakers of the urgent need for better transparency into YouTube’s AI,” Geurkink added.

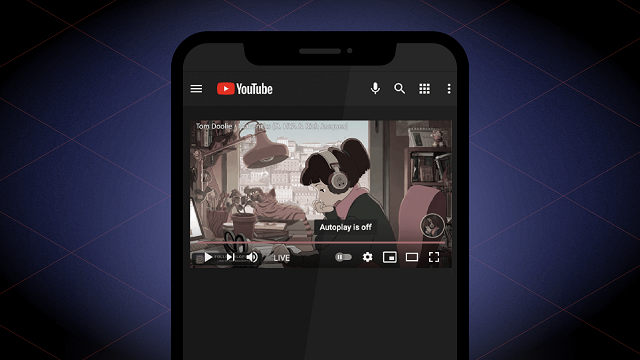

So what does Mozilla recommend that people do? Turn off Autoplay, one of YouTube’s automated features, which suggests what to watch next after your video ends. But turning it off does not solve the whole problem: every time you click on a YouTube recommendation, the company uses that selection as part of its algorithm to decide what to offer you in the future. One thing that you can do is delete your search history. To do this, simply go to the “Settings” gear icon, tap “History & privacy” and then “Clear search history.”

You can also turn off notifications and use private browsing.

As for YouTube violating its own policies, Mozilla found that YouTube’s algorithm amplifies harmful, debunked, and inappropriate content. One of the volunteers who shared data with Mozilla said that he watched videos about the U.S. military and was then recommended a misogynistic video titled “Man humiliates feminist in viral video.” Another person watched a video about software rights and was then recommended a video about gun rights. And a third person watched an Art Garfunkel music video and was then recommended a highly sensationalized political video titled “Trump Debate Moderator EXPOSED as having Deep Democrat Ties, Media Bias Reaches BREAKING Point.”

Mozilla states that the research uncovered numerous examples of hate speech, debunked political and scientific misinformation, and other categories of content that would likely violate YouTube’s Community Guidelines. It also uncovered many examples that paint a “more complicated picture of online harm.” Many of the videos reported may fall into the category of what YouTube calls “borderline content”—videos that “skirt the borders” of their Community Guidelines without actually violating them.